Dashboard

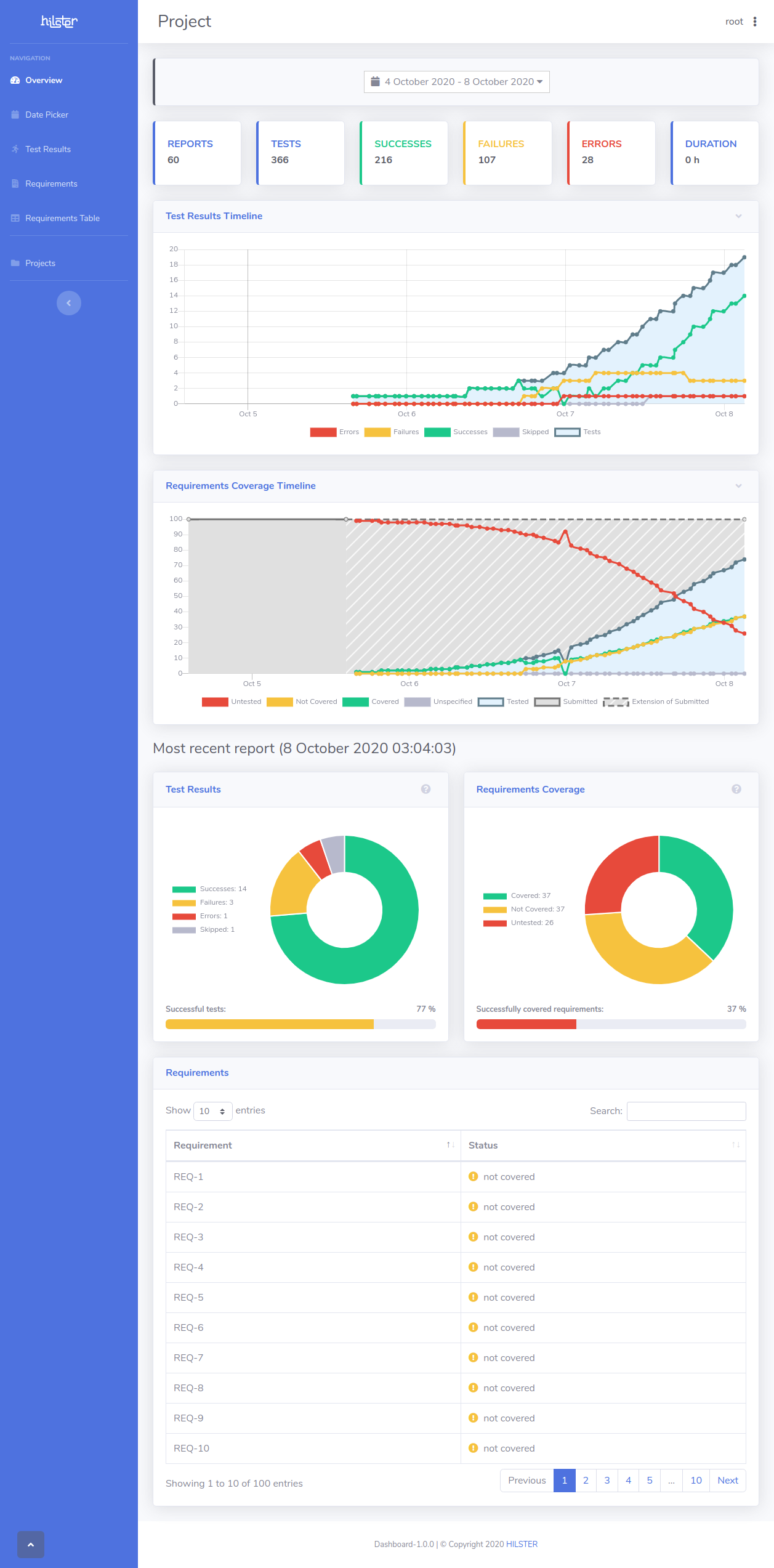

The HILSTER Dashboard is an interactive analytics tool for test results during development.

The Dashboard is fully integrated into QABench: test reports can be sent to it automatically when a test run finishes.

JUnit-XML compatible reports generated by other testing suites can also be uploaded, giving a comprehensive overview of the project’s current test status.

It serves users in both management and development roles by showing the current project status as well as changes over time.

Project requirements can be supplied to the Dashboard, enabling users to see whether they are already covered by tests.

You can find more information in the Dashboard documentation.

Starting the Dashboard

The Dashboard requires Docker and Docker Compose to run. Both can be installed via your distribution’s repositories. Because the Dashboard is shipped as a Linux image, you need either a Linux machine or Docker for Windows configured to run Linux images in order to run the Dashboard demo.

To install Docker and Docker Compose, please refer to the Docker documentation and the Docker Compose documentation.

You also need Git.

First, check out the Docker Compose files from HILSTER’s GitHub repository.

git clone https://github.com/hilstertestingsolutions/dashboard-deployment.git

cd dashboard-deployment

Edit docker-compose.yml and fill in your activation key.

Then start the Dashboard

docker-compose up

You can now navigate to http://localhost in your browser to visit an empty Dashboard.

The default username is root, and the default password is also root.
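Before logging in, it can help to confirm that the containers are actually answering requests. The following is a minimal sketch using only the Python standard library. The helper name `dashboard_is_reachable` and the timeout value are illustrative assumptions; only the URL http://localhost is taken from the steps above.

```python
import urllib.error
import urllib.request


def dashboard_is_reachable(url: str = "http://localhost", timeout: float = 3.0) -> bool:
    """Return True if an HTTP server answers at `url` (hypothetical helper)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # A server answered, just not with a success status (for example 401 or 404).
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, name resolution failure or timeout: nothing is serving yet.
        return False
```

Calling `dashboard_is_reachable()` shortly after `docker-compose up` may return False while the containers are still starting, so retrying after a few seconds is reasonable.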

Importing a Project

First, log in as root

dashboard login

Enter root as both username and password.

Change directory to dashboard

cd dashboard

Create an empty project

dashboard create-project imported_project "Imported Project"

Then upload the exported project data by running

dashboard import-project imported_project project_export.zip

Now visit http://localhost/project/imported_project with your browser.

You might need to log in with the same credentials you used on the command line.

Uploading Reports with HILSTER Testing Framework

Create another empty project

dashboard create-project example "Example Project"

If you reload the browser, you should now see the empty project Example Project (http://localhost/project/example).

You might need to log in with the same credentials you used on the command line.

Run test_example.py (listed below the command) multiple times and experiment with the code.

htf test_example.py -r http://localhost/project/example

#
# Copyright (c) 2023, HILSTER - https://hilster.io
# All rights reserved.
#
import htf
import htf.assertions as assertions

successes = 1
failures = 1
errors = 1
skipped = 1

all_requirements = [f"REQ-{i}" for i in range(1, 10 + 1)]
current_requirements = all_requirements[:3]
failing_requirements = current_requirements[: len(current_requirements) // 3]
errored_requirements = current_requirements[: -len(current_requirements) // 2]


@htf.data(range(successes))
@htf.requirements(*current_requirements)
def test_success(i: int) -> None:
    pass


@htf.data(range(failures))
@htf.requirements(*failing_requirements)
def test_failure(i: int) -> None:
    assertions.assert_false(True, "This test fails!")


@htf.data(range(errors))
@htf.requirements(*errored_requirements)
def test_error(i: int) -> None:
    raise Exception("This test has an error!")


@htf.data(range(skipped))
def test_skip(i: int) -> None:
    raise htf.SkipTest("This test is skipped!")


if __name__ == "__main__":
    htf.main(report_server="http://localhost/project/example")
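The slicing expressions in test_example.py are compact, so it may help to see what they evaluate to. The sketch below repeats only the four list definitions from the listing (no htf installation is needed) and prints the results.

```python
# Same definitions as in test_example.py, reproduced without htf.
all_requirements = [f"REQ-{i}" for i in range(1, 10 + 1)]
current_requirements = all_requirements[:3]

# 3 // 3 == 1, so the slice keeps only the first requirement.
failing_requirements = current_requirements[: len(current_requirements) // 3]

# Unary minus binds tighter than //, so this is (-3) // 2 == -2 and the slice is [:-2].
errored_requirements = current_requirements[: -len(current_requirements) // 2]

print(current_requirements)  # ['REQ-1', 'REQ-2', 'REQ-3']
print(failing_requirements)  # ['REQ-1']
print(errored_requirements)  # ['REQ-1']
```

With these values, test_success is linked to REQ-1 through REQ-3, test_failure and test_error are both linked to REQ-1 only, and REQ-4 through REQ-10 are not referenced by any test. Changing the slice bounds is an easy way to vary which requirements each test references.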

Upload requirements to the project

dashboard upload-requirements example requirements_10.csv

Check the project page in the browser. Run the tests again multiple times if you wish.

You can also upload other requirements to see how the project changes.
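Repeated runs can also be scripted. The following is a minimal sketch, assuming htf is on the PATH and test_example.py is in the current directory. It only wraps the htf command line shown earlier; the helper names and the run count are illustrative and not part of the HILSTER tooling.

```python
import subprocess
from typing import List

REPORT_URL = "http://localhost/project/example"


def build_htf_command(script: str, report_url: str) -> List[str]:
    """Build the command line used above: htf <script> -r <report_url>."""
    return ["htf", script, "-r", report_url]


def run_repeatedly(script: str, report_url: str, runs: int) -> List[int]:
    """Run the test script `runs` times and collect the exit codes."""
    exit_codes = []
    for _ in range(runs):
        # check=False: a non-zero exit code, should htf return one for
        # failing tests, must not abort the loop.
        completed = subprocess.run(build_htf_command(script, report_url), check=False)
        exit_codes.append(completed.returncode)
    return exit_codes
```

For example, `run_repeatedly("test_example.py", REPORT_URL, runs=5)` sends five reports to the example project, which gives the project page some history to display.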

Requirements Coverage Using Multiple Tools

Assume you have a project which has 20 requirements. The first half is tested with a unit testing framework that generates a JUnit-XML report (unittests.xml). The other half is tested by integration tests using HILSTER Testing Framework. You’d like to measure the requirements coverage over the whole project. This is possible with HILSTER Testing Framework and Dashboard.
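For readers without a unit-test report at hand, a JUnit-XML document of the kind assumed here can be sketched with the Python standard library. The element names (testsuite, testcase, failure, skipped) follow the common JUnit-XML layout. The test names are made up, and how requirement IDs are attached to JUnit-XML test cases for the Dashboard is not covered in this section, so the sketch leaves them out.

```python
import xml.etree.ElementTree as ET


def build_junit_report() -> ET.ElementTree:
    """Build a small JUnit-XML tree with one passing, one failing and one skipped test."""
    suite = ET.Element(
        "testsuite", name="unittests", tests="3", failures="1", errors="0", skipped="1"
    )
    # A test case without child elements counts as passed.
    ET.SubElement(suite, "testcase", classname="example", name="test_passes", time="0.01")

    failing = ET.SubElement(suite, "testcase", classname="example", name="test_fails", time="0.02")
    failure = ET.SubElement(failing, "failure", message="expected 1, got 2")
    failure.text = "AssertionError: expected 1, got 2"

    skipped = ET.SubElement(suite, "testcase", classname="example", name="test_skipped", time="0.0")
    ET.SubElement(skipped, "skipped", message="not implemented yet")
    return ET.ElementTree(suite)


report = build_junit_report()
xml_text = ET.tostring(report.getroot(), encoding="unicode")
print(xml_text)
```

To store the result as a file, `report.write("unittests.xml", encoding="utf-8", xml_declaration=True)` can be used. In practice the unit testing framework produces this file itself.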

Create another project and upload the desired requirements.

dashboard create-project coverage_project "Coverage Measurement"

dashboard upload-requirements coverage_project requirements_20.csv

Create the integration test report

htf -H integrationtests.html integrationtests.py

Use the report-tool to combine both reports and to send them to the Dashboard.

report-tool unittests.xml integrationtests.html -r http://localhost/project/coverage_project

Now navigate to http://localhost/project/coverage_project to check the requirements coverage.

You can vary the scripts to see how the requirements coverage changes.
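The idea behind combining both reports can be illustrated with plain set arithmetic. The sketch below is not how the Dashboard computes coverage internally. It only assumes, as in the scenario above, that the unit tests reference the first ten of 20 requirements and the integration tests the other ten; the REQ-n naming follows test_example.py and the `coverage` helper is hypothetical.

```python
from typing import Iterable, Set


def coverage(all_requirements: Iterable[str], *covered_sets: Set[str]) -> float:
    """Return the fraction of requirements referenced by at least one report."""
    required = set(all_requirements)
    if not required:
        return 0.0
    # Union over all reports, restricted to requirements that actually exist.
    covered = set().union(*covered_sets) & required
    return len(covered) / len(required)


all_requirements = [f"REQ-{i}" for i in range(1, 21)]
unit_test_requirements = set(all_requirements[:10])          # referenced in unittests.xml
integration_test_requirements = set(all_requirements[10:])   # referenced in integrationtests.html

print(coverage(all_requirements, unit_test_requirements))         # 0.5
print(coverage(all_requirements, integration_test_requirements))  # 0.5
print(coverage(all_requirements, unit_test_requirements, integration_test_requirements))  # 1.0
```

Each report on its own references only half of the requirements, which is why both are combined before being sent to the Dashboard.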